Meet your AI data team

Bruin answers, builds, and acts across all of your data points.

WHAT BRUIN DOES FOR YOU

ANSWERS

In minutes. No follow-up needed.

BUILDS

Builds dashboards, from a single prompt.

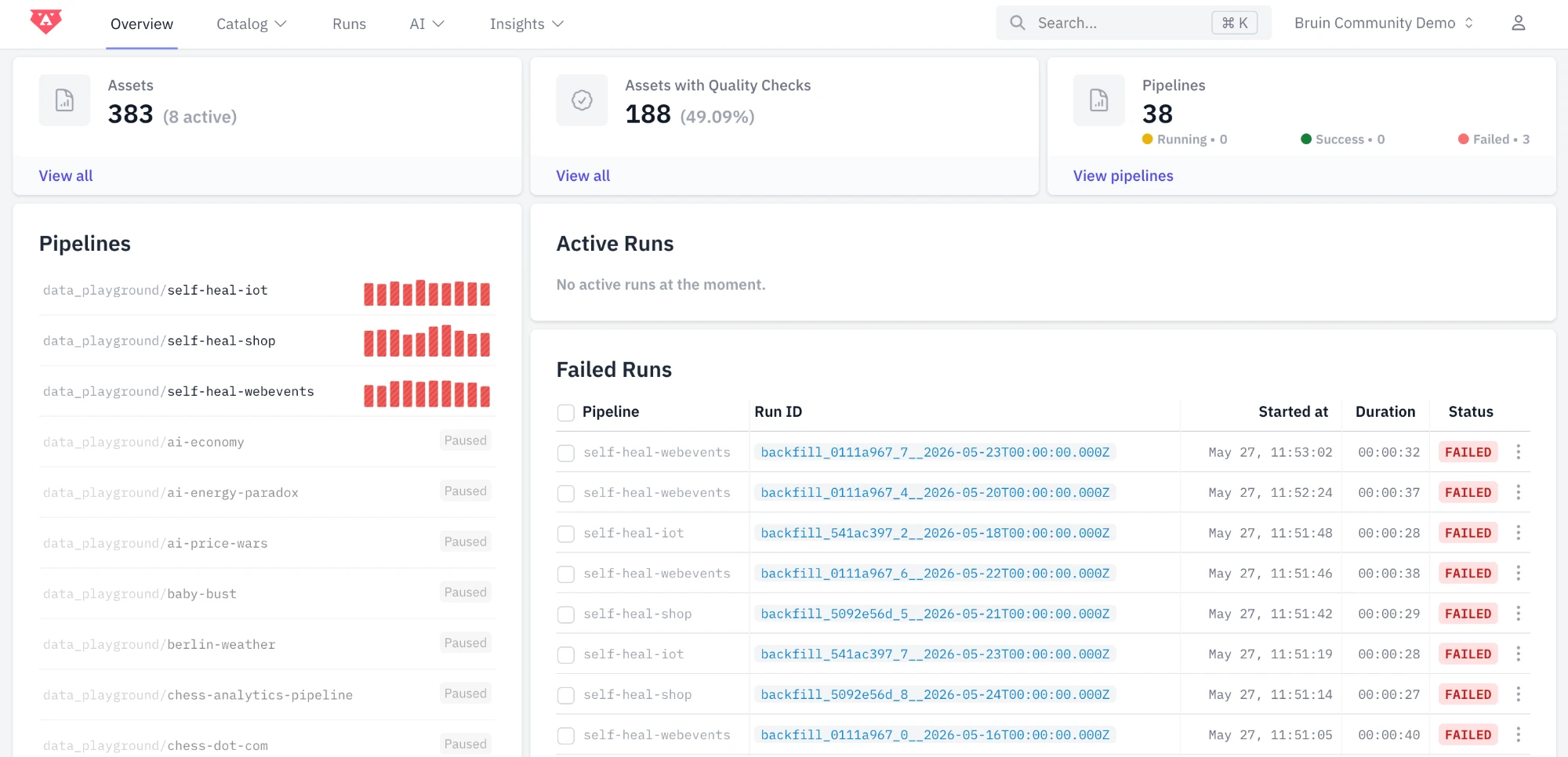

ACTS

Watches your data. Pauses, flags, fixes.

Morning team, here’s your brief:

- Campaign auto-paused. $1,847 saved.

- Reddit trend spotted.

UGH!

You’ve hired more people. You’ve added more tools. Still, you wait days for an answer, a decision, or anything to actually happen.

YOU DESERVE A BETTER DATA PLATFORM

From any source to any outcome

Bruin connects to thousands of data sources and turns them into answers, dashboards, briefs, alerts, and auto-fixes.

Adjust

Shopify

WHAT TO EXPECT

Other companies have already done the math.

WAIT, WHY NOT JUST ANY AI?

You can’t fix a broken stack with a chatbot.

You’ve uploaded CSVs to ChatGPT. You’ve blindly trusted code from Claude.

You know how that ends.

- Doesn’t know your sources or definitions. Hallucinates.

- Just answers. Your team verifies. Your team acts.

- One more tool on top of the seven you already have.

Bruin, by contrast:

- Reads your live data on a real semantic layer. Cites the query, the source, the snapshot.

- Answers, builds, and acts. Across the full data lifecycle.

- Use all of it, or plug into your dbt, Airflow, and Looker stack.

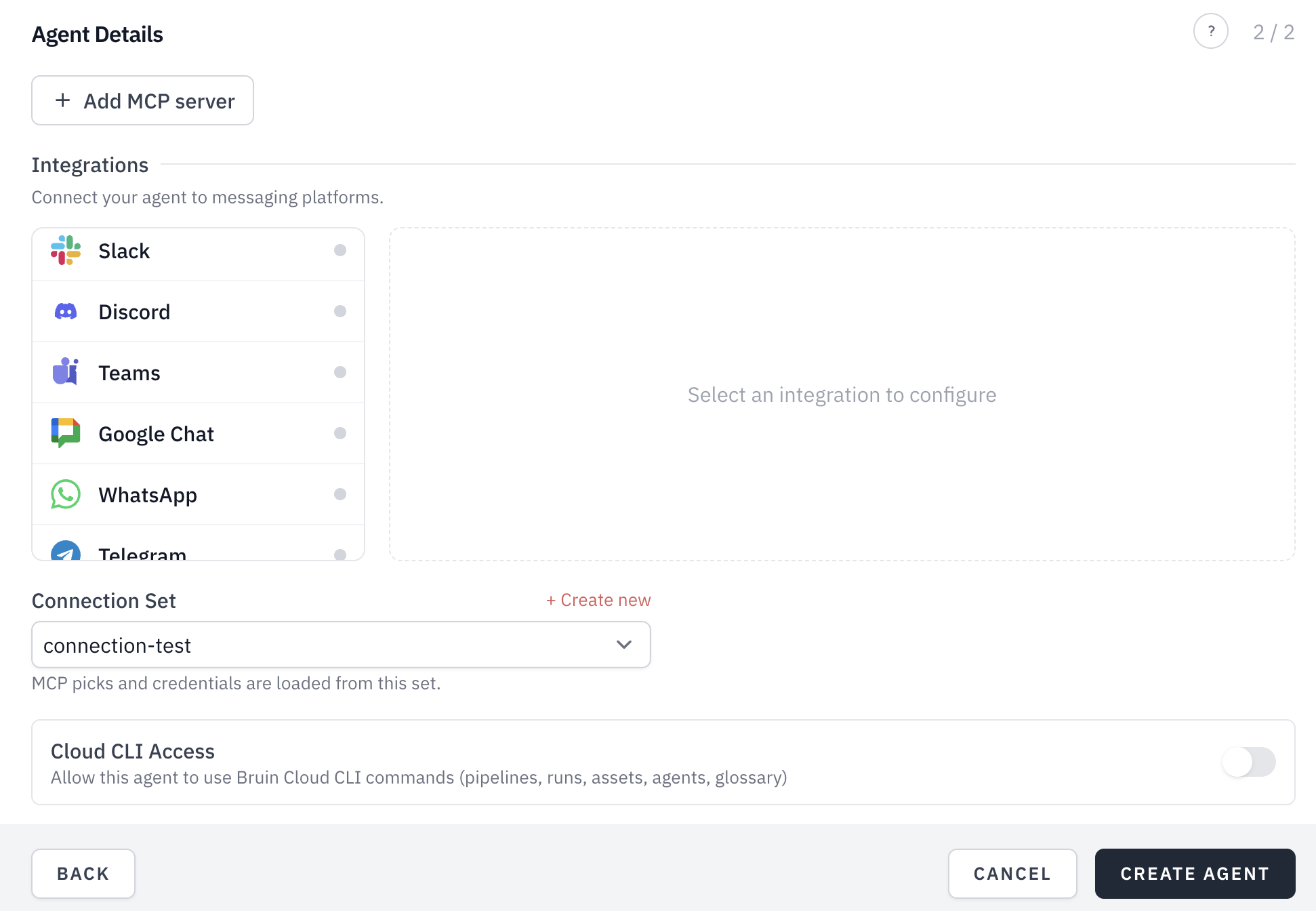

IMAGINE A DATA TEAM RUNNING 24/7

Your whole data stack and AI, in one tool.

Now meet your team.

Two minutes to connect a warehouse and ask the first question.

Not sure where to start? See Bruin for your role →

SECURITY & COMPLIANCE

Enterprise-Grade Security

SOC 2 Type 2 certified, with comprehensive security controls and audit capabilities.

The core is open. Inspect everything.

Run the Bruin CLI anywhere a binary runs. Self-host the whole stack, or layer the managed cloud on top for the AI analyst and dashboards. MIT licensed, no lock-in, no black box.

curl -LsSf https://getbruin.com/install/cli | sh

Hear it from the companies already running on Bruin.

"Easy recommendation to give to any studio wanting to set up their data pipeline on a solid base. Data Engineers are expensive, today you don't need one if you run on Bruin... save money, save time!"

"With Bruin, what previously took hours can now be accomplished in just 15 minutes."

"I loved the answers from Bruin AI data analyst, it's exactly the type of contribution I hope to have from it. It got straight to the point."

"Thanks to Bruin, we have been able to automate all the manual parts of our data pipelines. We are able to focus on our business users' needs while delivering insights faster than ever before."

"Bruin's platform is incredibly intuitive. As a new team member, I was able to contribute effectively from day one."

"Partnering with Bruin has significantly increased our productivity, making it easy for our data team to manage everything seamlessly."

"Bruin strengthens our data infrastructure, boosting user acquisition accuracy and efficiency."