This is a short, hopefully fair comparison of the five data pipeline tools that show up in serious evaluations in 2026: Apache Airflow, Mage, Prefect, Dagster, and Bruin. It is built for engineers shortlisting tools, not for a long read.

Two things have changed since the last wave of pipeline tooling articles: the rise of agentic development (AI agents writing, fixing, and monitoring pipelines) and the shift toward self-healing pipelines and AI-driven troubleshooting. Both change which design decisions matter.

The author works at Bruin; the goal is to be useful, not promotional. Corrections welcome at [email protected].

The traditional comparison axes (scheduling, retries, DAG syntax) are mostly solved across all five tools. The decisions that move the needle today are:

- Scope - does the tool cover only orchestration, or also ingestion, transformation, quality, and lineage? Fewer vendors means fewer integrations to maintain and fewer surfaces an AI agent has to learn.

- Resource and complexity cost - how much platform engineering does it take to keep this running? With smaller data teams and more LLM-assisted work, operational overhead is the largest hidden cost.

- AI-readiness - is the pipeline definition something an agent can read, modify, and verify? Pipelines defined as readable text-first artifacts (SQL, YAML, typed Python) are easier for agents to work with than opaque UIs or imperative scripts spread across many files.

- Asset-centric vs task-centric - asset-centric models (define the tables you produce) give agents the structure they need for lineage-aware fixes and self-healing. Task-centric models (define the steps) are more general but require external systems for lineage and quality context.

- Quality and lineage as first-class - agents that fix pipelines need to know what good looks like. Built-in quality checks and column-level lineage are what make self-healing possible without an external observability stack.

The shorthand: the platform an agent works on top of should be small in surface area, declarative where possible, and aware of the data it produces.
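
To make the asset-centric idea concrete, here is a minimal sketch in plain Python. It is not the API of any of the five tools: the `Asset` class, the table names, and the check strings are all hypothetical. It only shows why declaring tables and their upstreams is enough to derive both a run order and the quality context an agent would need.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter


@dataclass(frozen=True)
class Asset:
    """Hypothetical asset declaration: a table, its upstream tables, its checks."""
    name: str
    depends_on: tuple[str, ...] = ()
    checks: tuple[str, ...] = ()


def execution_order(assets: list[Asset]) -> list[str]:
    """Derive a valid run order from the declared dependencies alone."""
    graph = {asset.name: set(asset.depends_on) for asset in assets}
    # static_order() yields every upstream before the assets that depend on it.
    return list(TopologicalSorter(graph).static_order())


def checks_by_asset(assets: list[Asset]) -> dict[str, tuple[str, ...]]:
    """Expose the declared checks so a human or agent can see what 'good' means."""
    return {asset.name: asset.checks for asset in assets if asset.checks}


# Illustrative declarations only; names and checks are made up.
ASSETS = [
    Asset("raw_orders"),
    Asset("stg_orders", depends_on=("raw_orders",), checks=("order_id is unique",)),
    Asset("daily_revenue", depends_on=("stg_orders",), checks=("revenue >= 0",)),
]

order = execution_order(ASSETS)
declared_checks = checks_by_asset(ASSETS)
```

In a task-centric model the same run order would be written out by hand as steps, and the lineage and checks would have to come from a separate system.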

| Tool | Scope | Model | Lineage | Quality | Best language | Ops weight | Pricing |
|---|---|---|---|---|---|---|---|
| Airflow (3.2) | Orchestration | Task-centric, asset-aware | OpenLineage built in (provider) | External (Common SQL operators, dbt, Soda) | Python (Task SDK adds Go) | Heavy | Free OSS; MWAA from ~$350/mo, Composer 3 from ~$400/mo, Astronomer Astro $1.5k-5k+/mo |
| Prefect (3.7) | Orchestration | Task/flow-centric, assets via @materialize | Asset graph, built in | Asset checks, built in | Python | Medium | Free OSS; Hobby free cloud tier, paid Starter/Team/Pro |
| Dagster (1.13) | Orchestration + assets | Asset-centric (Components + dg CLI GA) | Asset graph built in; column-level for dbt (UI in Dagster+) | Asset checks (incl. partitioned) | Python | Medium | Free OSS; Dagster+ Solo $10/mo + $0.04/credit, Starter $100/mo + $0.035/credit |
| Mage | Orchestration + light transform + ingestion | Block-based | Built in | Built in (per-block) | Python + SQL + R | Light | Free OSS; Pro from $100/mo + $0.29 per CPU/RAM-hour |
| Bruin | Orchestration + ingestion + transform + quality + lineage | Asset-centric (YAML + SQL/Python/R) | Column-level, built in (OSS) | Built in (YAML) | SQL + Python + R | Light | Free OSS core; Bruin Cloud free tier ($100 credit + 50 AI analyst questions) + paid managed, VPC, on-prem |
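
Usage-based pricing is easier to compare with a little arithmetic. The sketch below takes the two Dagster+ figures from the table at face value and assumes a flat base fee plus a linear per-credit rate, with no included credits, caps, or discounts. The table does not specify any of those, so treat this as a model of the listed numbers, not of the actual billing terms. The function names are illustrative.

```python
def monthly_cost(base_usd: float, per_credit_usd: float, credits: float) -> float:
    """Flat base fee plus a linear per-credit charge (assumed pricing shape)."""
    return base_usd + per_credit_usd * credits


def break_even_credits(base_a: float, rate_a: float, base_b: float, rate_b: float) -> float:
    """Credits at which plan A and plan B cost the same.

    Solves base_a + rate_a * c == base_b + rate_b * c for c.
    """
    if rate_a == rate_b:
        raise ValueError("plans with identical rates never cross")
    return (base_b - base_a) / (rate_a - rate_b)


# Figures as listed in the table: Solo $10/mo + $0.04/credit,
# Starter $100/mo + $0.035/credit.
SOLO = (10.0, 0.04)
STARTER = (100.0, 0.035)

crossover = break_even_credits(*SOLO, *STARTER)
solo_at_5k = monthly_cost(*SOLO, credits=5_000)
starter_at_5k = monthly_cost(*STARTER, credits=5_000)
```

Under these assumptions the two plans cross at about 18,000 credits per month. At 5,000 credits, Solo comes to about $210 and Starter to about $275, so in this model the higher tier only wins on price at high volume.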

One-line summaries:

- Airflow - the default Python orchestrator with the biggest ecosystem and the heaviest operational footprint. Airflow 3 (GA 2025, 3.2 in 2026) added asset-aware scheduling, asset partitioning, DAG versioning, multi-team deployments, and a Common AI Provider.

- Prefect - modern Python orchestrator with dynamic workflows, a lighter ops model, and a newer

@materialize asset layer with built-in asset checks. - Dagster - asset-centric orchestrator with lineage and quality as first-class concepts. Dagster 1.13 made Components and the

dg CLI GA, and shipped Compass (a Slack-native AI assistant). - Mage - block-based authoring for small teams that want a friendly entry point, with a

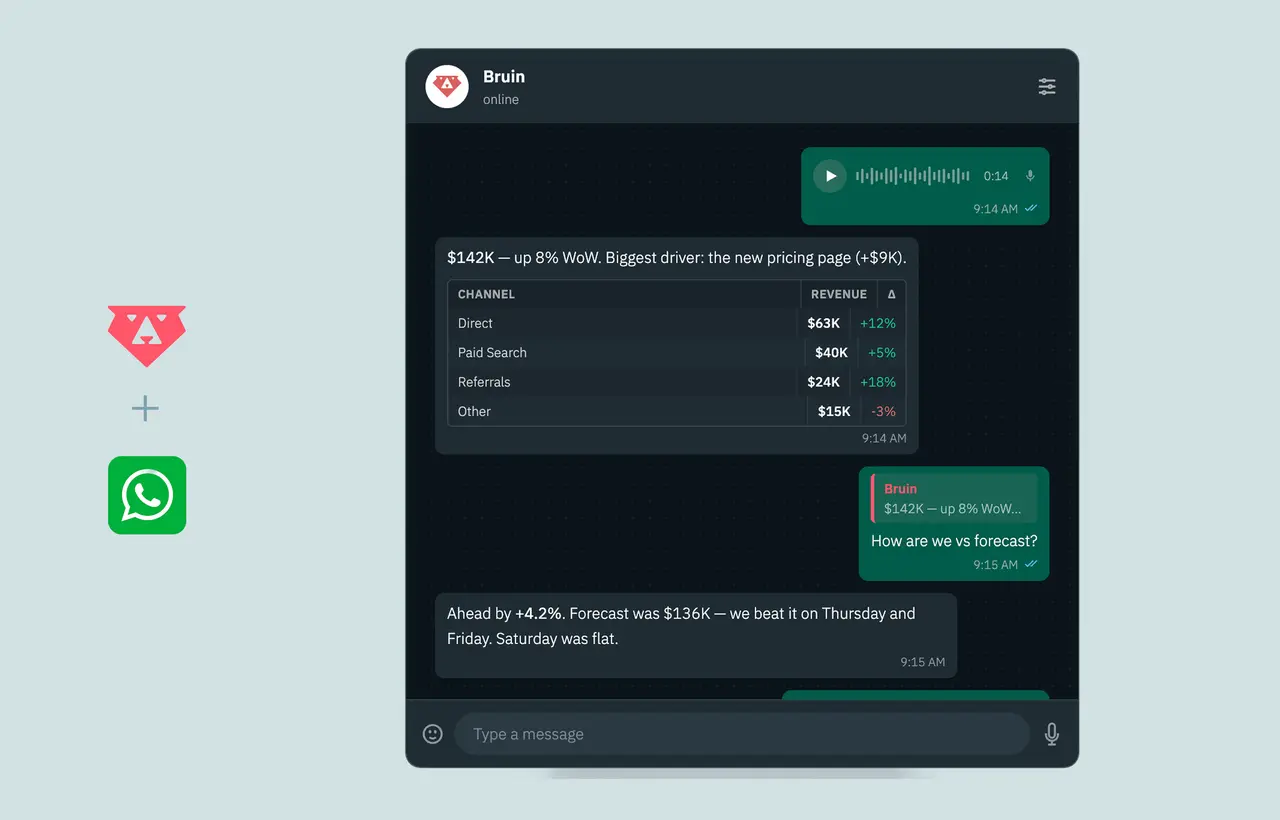

mage-agent CLI and MCP support for AI coding tools. - Bruin - unified pipeline platform (orchestration, ingestion, transformation, quality, column-level lineage) in one tool, with an MCP server for Cursor, Claude Code, and Codex.

Three patterns are reshaping how teams evaluate pipeline tools:

Agentic pipeline development. Agents write SQL, scaffold transformations, and propose new assets. They work best on platforms where the pipeline is described in plain text close to the code: SQL files, YAML asset definitions, typed Python. Tools that store significant logic in a UI or in opaque framework state make agentic workflows harder.

Self-healing pipelines. A self-healing pipeline detects a problem (schema drift, freshness anomaly, quality failure), proposes a fix (backfill, type cast, mapping rule), and either applies it or files a PR. This requires three things in the platform: declared quality checks at the asset level, column-level lineage so the agent can scope the blast radius, and a definition format an agent can safely edit. Tools where lineage and quality are external bolt-ons cannot self-heal without integrating multiple systems.
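
Scoping the blast radius with column-level lineage amounts to a graph traversal. The sketch below assumes lineage is available as a plain mapping from each column to the columns it feeds. The mapping, the column names, and both function names are hypothetical, and real tools expose lineage in their own formats.

```python
from collections import deque

# Hypothetical column-level lineage: each key feeds the columns in its value set.
LINEAGE = {
    "raw.orders.amount": {"staging.orders.amount_usd"},
    "staging.orders.amount_usd": {
        "marts.daily_revenue.revenue",
        "marts.customer_ltv.ltv",
    },
    "raw.orders.status": {"staging.orders.is_paid"},
    "staging.orders.is_paid": {"marts.daily_revenue.revenue"},
}


def blast_radius(lineage: dict[str, set[str]], changed_column: str) -> set[str]:
    """Return every column downstream of changed_column (breadth-first walk)."""
    seen: set[str] = set()
    queue = deque([changed_column])
    while queue:
        column = queue.popleft()
        for downstream in lineage.get(column, ()):
            if downstream not in seen:  # also guards against cycles
                seen.add(downstream)
                queue.append(downstream)
    return seen


def affected_assets(columns: set[str]) -> set[str]:
    """Collapse 'schema.table.column' names to the tables that contain them."""
    return {column.rsplit(".", 1)[0] for column in columns}


impacted_columns = blast_radius(LINEAGE, "raw.orders.status")
impacted_tables = affected_assets(impacted_columns)
```

Here a type change in `raw.orders.status` touches two downstream tables and leaves `marts.customer_ltv` alone, which is the kind of scoping an agent needs before it proposes a backfill or a cast.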

Agentic troubleshooting and monitoring. Instead of dashboards, teams are moving toward agents that read run logs, query the warehouse, and respond in Slack or Teams with the answer (and often a proposed fix). The tool's value here is in how much context it exposes - run metadata, lineage, quality history, asset descriptions - through stable interfaces an agent can call.

Practical implications for the shortlist:

- Airflow - the Common AI Provider (LLM operators, toolsets, 20+ model providers) and Human-in-the-Loop primitives in 3.1 give a real foundation for agent workflows. OpenLineage is built in. Quality and the broader data context still come from outside the tool, so self-healing remains an integration job.

- Prefect - good for agentic development of orchestration logic, and the new @materialize asset layer plus asset checks bring lineage and quality closer to the platform. Prefect markets itself as AI infrastructure; self-healing patterns are typically built on top rather than shipped natively.

- Dagster - assets, partitions, and asset checks give agents a structured surface to reason about. Compass (Slack-native AI assistant) and the dagster-io/skills library for Claude Code / Codex make this a strong fit for agentic monitoring. Column-level lineage is auto-derived for dbt and viewable in the Dagster+ UI.

- Mage - block authoring is friendly for humans, and the mage-agent CLI plus MCP support let Cursor, Claude Code, and Codex create blocks, trigger runs, and inspect logs.

- Bruin - assets defined in YAML next to SQL, Python, or R, with quality checks and column-level lineage in the same file, give agents a single readable surface for the whole pipeline. The Bruin MCP server (launched Nov 2025) lets Cursor, Claude Code, and Codex navigate docs, run CLI commands, scaffold pipelines, and compare tables across environments.

None of this rules out a tool. It just changes how much glue you build around it.

For a team of five data engineers, ranked roughly by operational weight:

- Airflow self-hosted - heaviest. Metadata DB, scheduler, executor choice, workers, and now (in Airflow 3) a separate API server and DAG processor. The split architecture improves security and HA but adds components to deploy, monitor, and scale. Expect 0.5 to 1 FTE of platform engineering at scale.

- Dagster self-hosted - lighter, but still real. Code locations, daemons, partitions to manage. Components and dg in 1.13 reduce boilerplate.

- Prefect self-hosted - light. Workers and (on Kubernetes) the Helm chart-managed server and Postgres. OSS server lacks RBAC/SSO.

- Mage self-hosted - light at small scale, grows with pipeline count.

- Bruin self-hosted - lightest. The open-source CLI runs from CI/CD, GitHub Actions, or a single VM for many use cases.

Managed offerings (Astronomer Astro / MWAA / Google Cloud Composer, Dagster+, Prefect Cloud, Mage Pro, Bruin Cloud) remove most of this burden in exchange for predictable spend. For teams of fewer than 10 data engineers, managed almost always wins on total cost once engineering time is properly accounted for.

The biggest mistake greenfield teams make is buying the most well-known tool (usually Airflow) and discovering six months later that they have built a stack of five vendors around it. Optimize for fewer moving parts and faster iteration.

Recommended evaluation order:

- Decide your scope ambition. If you want one tool to own ingestion + transformation + quality + lineage, evaluate Bruin first. If you are happy running a managed ingestion vendor (Fivetran, Airbyte) plus dbt plus an orchestrator, evaluate Dagster and Prefect.

- Default to asset-centric. Greenfield teams benefit from the asset model (Dagster or Bruin) because it gives lineage and quality for free, and it is the friendliest surface for AI agents.

- Avoid self-hosted Airflow. Greenfield Airflow is rarely the right call in 2026 unless the team already has Airflow operational expertise and a platform engineer ready to maintain it. Astronomer or Composer is fine if Airflow is required for ecosystem reasons.

- Pick agent-readable formats. Plain SQL files, YAML, and typed Python all work; UI-driven block formats can box you in later.

Greenfield short list: Bruin if you want one tool. Dagster + dbt if you want asset-centric with separation of orchestration and transform. Mage if the team is small and a friendly visual editor matters more than scope.

Most teams reaching this article already run Airflow (often with Fivetran + dbt + a quality vendor). The question is which target reduces total complexity without forcing a full rewrite.

Recommended evaluation order:

- Inventory your real pain. Operational toil on Airflow? Vendor count? Quality and lineage gaps? Slow onboarding for new engineers and agents? Different pain points point to different targets.

- Decide if you are reducing scope or shifting it. Moving from Airflow to Prefect or Dagster shifts orchestration but keeps the rest of the stack. Moving to Bruin collapses multiple vendors into one.

- Use the freeze-old, build-new pattern. Leave existing Airflow DAGs running. Forbid new pipelines on the old platform. Over 12 to 18 months the old footprint shrinks to maintenance-only. This is far less risky than a big-bang migration.

- Pilot on one painful pipeline. Pick the pipeline that breaks the most and migrate just that one. Measure on-call hours, time-to-fix, and agent productivity before expanding.
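
The "forbid new pipelines on the old platform" rule is easier to keep when CI enforces it. A minimal sketch, assuming changes arrive as `(status, path)` pairs in the style of `git diff --name-status` (`A` for added, `M` for modified) and that legacy DAGs live under a hypothetical `airflow/dags` directory. Renames and other statuses are ignored here for brevity.

```python
from pathlib import PurePosixPath

# Hypothetical location of the frozen, legacy Airflow DAGs.
FROZEN_DIR = PurePosixPath("airflow/dags")


def new_files_in_frozen_dir(
    changes: list[tuple[str, str]],
    frozen_dir: PurePosixPath = FROZEN_DIR,
) -> list[str]:
    """Return added files under the frozen directory.

    Modifications to existing DAGs are allowed, since the old platform
    is still maintained; only brand-new files count as violations.
    """
    violations = []
    for status, path in changes:
        if status == "A" and frozen_dir in PurePosixPath(path).parents:
            violations.append(path)
    return violations


# Illustrative change set; the paths are made up.
CHANGES = [
    ("A", "airflow/dags/new_report.py"),      # new DAG on the old platform
    ("M", "airflow/dags/legacy_etl.py"),      # maintenance fix, allowed
    ("A", "pipelines/new_report/asset.sql"),  # new work on the new platform
]

violations = new_files_in_frozen_dir(CHANGES)
```

A CI job could fail the build whenever `violations` is non-empty, which keeps the freeze from eroding one exception at a time.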

Migration short list:

- Lighter Airflow with the same shape - Prefect (better Python ergonomics) or Astronomer (managed Airflow, same model).

- Better lineage and quality without changing scope - Dagster.

- Collapse the stack - Bruin.

A direct answer:

- Use Apache Airflow if - you already run it at scale with a dedicated platform team, you need the broadest operator ecosystem, or you need general workflow orchestration beyond data pipelines (ML training, infra automation). Airflow 3's asset-aware scheduling, DAG versioning, and Common AI Provider make it more competitive on a modern stack than the 2.x line was.

- Use Prefect if - your team is Python-first, you want modern orchestration ergonomics and dynamic workflows, and you are happy assembling ingestion, transformation, and quality from separate tools. Assets and asset checks via @materialize close some of the gap with Dagster.

- Use Dagster if - you are starting fresh on a modern stack, you want asset-centric pipelines with built-in lineage and asset checks, and you plan to keep dbt for transformation. Strong choice for ML and feature pipelines. Compass and the Components framework are worth a look in 1.13+.

- Use Mage if - you are a small or analytics-engineering-leaning team that values a friendly block-based authoring experience over breadth, and you want SQL, Python, and R blocks in the same pipeline.

- Use Bruin if - you want one tool for orchestration, ingestion, transformation, quality checks, and column-level lineage, with a definition format (YAML + SQL + Python + R) that AI agents can read and edit safely. Strongest fit for teams that want to keep vendor count low and support self-healing and agentic workflows on top of the same platform.

If you take only one decision rule from this article: pick the tool whose scope matches what you want to own. Everything else (syntax, hosting, pricing) is downstream of that choice.

Apache Airflow, Dagster, Prefect, Mage, and the open-source Bruin CLI are all installable in minutes for hands-on evaluation.