The mythical "data teams" have been living their prime time over the past few years. The first thing every company that is moving towards a data-driven culture does is to hire engineers for the things they wished they did well, without thinking why.

It's like the "DevOps" of 2010s: everyone claims they do it , but everyone understands the role –or the philosophy, for that matter– differently.

The whole story revolves around this idea that the lifecycle of data is too complex – it is! – for analysts/scientists to manage – not just for them, all of us! –, therefore we drop these engineers from outside into a highly complex business environment, expecting them to deliver value using data right away.

Of course, this never works: engineering teams spend months building infrastructure, restructuring the data, and building tooling for things that might not even be used by the rest of the business. In the end, the company is out millions of dollars, and likely worse than where they were before.

The idea that data would be dealt with outside the rest of the business by throwing more engineers on it is a very old idea. It often plays out as follows:

- The company builds software and becomes successful in some way.

- They generate a lot of data; although, not as a first-class citizen, always as an afterthought.

- There'll be a Jane in marketing, and a Joe in sales who knows how to pull some data to Excel and get some numbers out. The data and the story it tells are still an afterthought.

- The company grows further, but the "Jane"s and "Joe"s are not enough to serve the rest of the business. They decide to hire an analyst or two.

- Guess what? Data is still an afterthought.

- The analysts are trying to help the rest of the business, but are unable to keep up with all the demand and feel abandoned while trying to navigate the hot mess.

- At some point, some exec will get mad at not being able to get some numbers and call the shots to build a data team.

This is where things get nasty because an issue that is primarily a cultural matter is being tackled by the means of throwing people on it. The budgets are secured, the openings are posted, and the applications start flowing in, with no change in how to think about data.

The engineers that are hired jump straight into throwing solutions on problems that are not real problems, simply because that's what they can influence rather than what should be done. The ability to deliver results is euphoric, regardless of their impact. A complex infrastructure is built to move a CSV from Google Drive to S3, and the leaders feel accomplished: " look at all this cloud bill we have! we are definitely data-driven. "

Data, my friends, is still an afterthought.

The reason that companies struggle with shifting away from the data being an afterthought mindset is the top-down approach towards getting value out of data: data is not like cash that a leader can decide how to use the best at the moment; on the contrary, data is like oil. Not in the sense of " data is the new oil yay ", but in the sense that it needs a lengthy and expensive process to bring out its value, and that requires deep investment. You know people, they don't love deep investments.

The first step in this process is to treat the data as an asset, just as any other asset the company has, not something nice to have. The good use of data can propel the business way further than any accounting process could, or any lawyer that can protect the company, therefore it needs to be treated with the same importance. Do you leave your bookkeeping unattended? Then you should not leave your data as well. This needs to propagate the ranks: the data is incredibly valuable, no data should be wasted, and it should be treated with utmost care.

Once the importance of the data is clear to the rest of the business, then comes the first tangible action to take: data is not a separate entity from the software organization, make them own it.

I have seen it repeated countless times that data is being treated as if it is a separate thing from the rest of the software –and the team that builds it–, which means that there's a data analyst on one side trying to duct-tape a bunch of tables together in some weird drag-and-drop tool, while the software team just drops a full table from production the next day.

The organizations that have the healthiest data landscape are those that have a very clear understanding of data ownership: every bit of data that is produced/ingested/transformed/used must have an owner, no discussion. Do you own the service that writes to this database? You own the data. Do you own the internal events being generated on Kafka? You own the data. Do you join these billion different tables into a new table? You own the data.

The most important point here is that the software teams are aware that the data they produce is owned by them. This means that they will be responsible for a few core questions to be answered properly:

- How will this data be made available to the rest of the organization?

- How will the quality of this data be ensured?

- How would anyone notice if the data went corrupt? How quickly?

- What is the change management process around this data?

This means that the software teams will start treating their data just as they are treating their services. Not sure about your experience, but the quality of software services has been treated way higher than the quality of the data they produced in my experience, which means this is a win in my book.

There has been a large shift in the software world with the spread of concepts such as Domain-Driven Design , and the result ended up being domain-oriented services, owned by domain-focused teams, managing individual "products" of the larger product the company produces. This enabled ownership, independence, reliability, and more importantly the ability to deliver high-quality software quickly to become a competitive advantage. The data world is in for a similar transition.

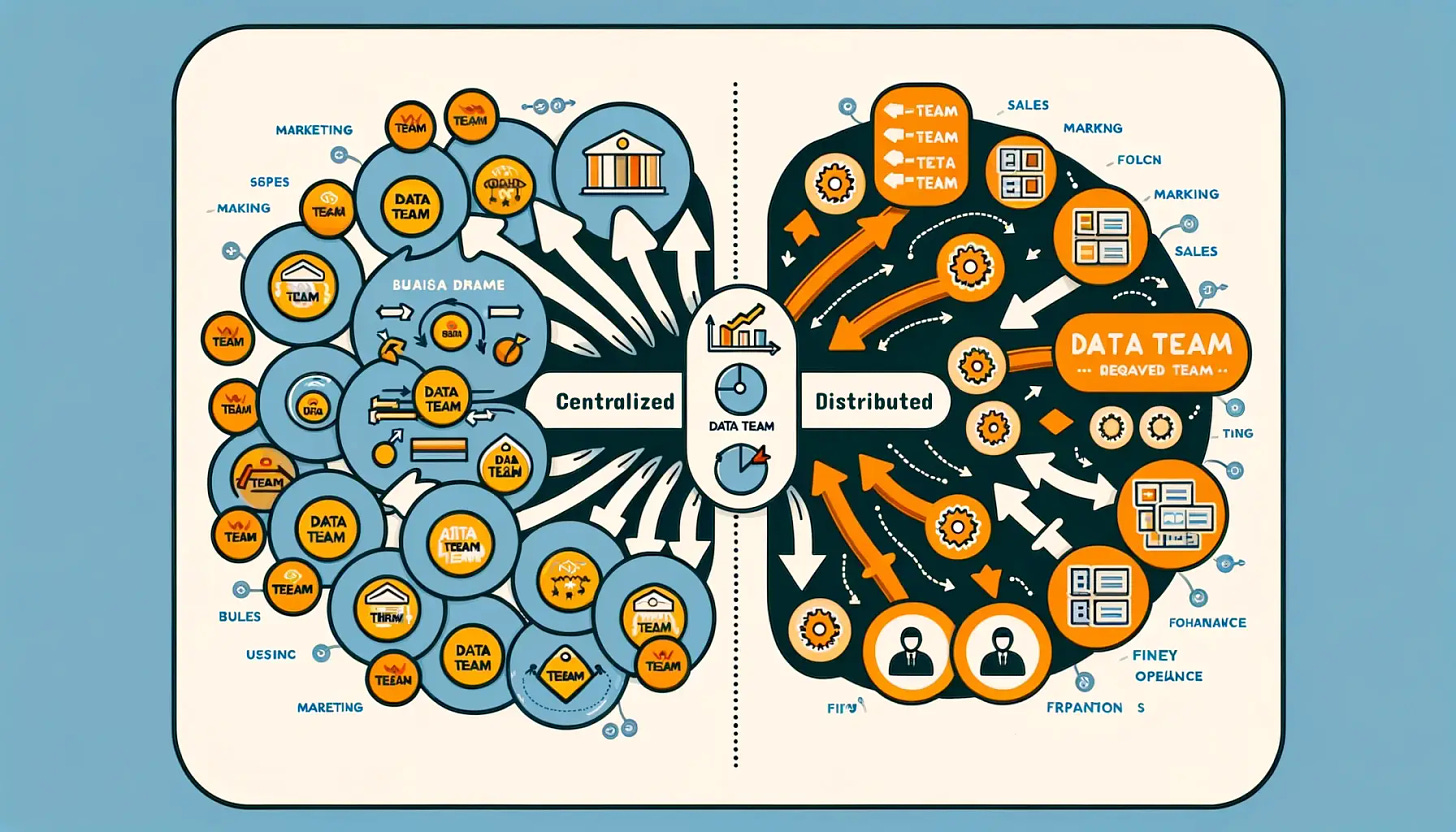

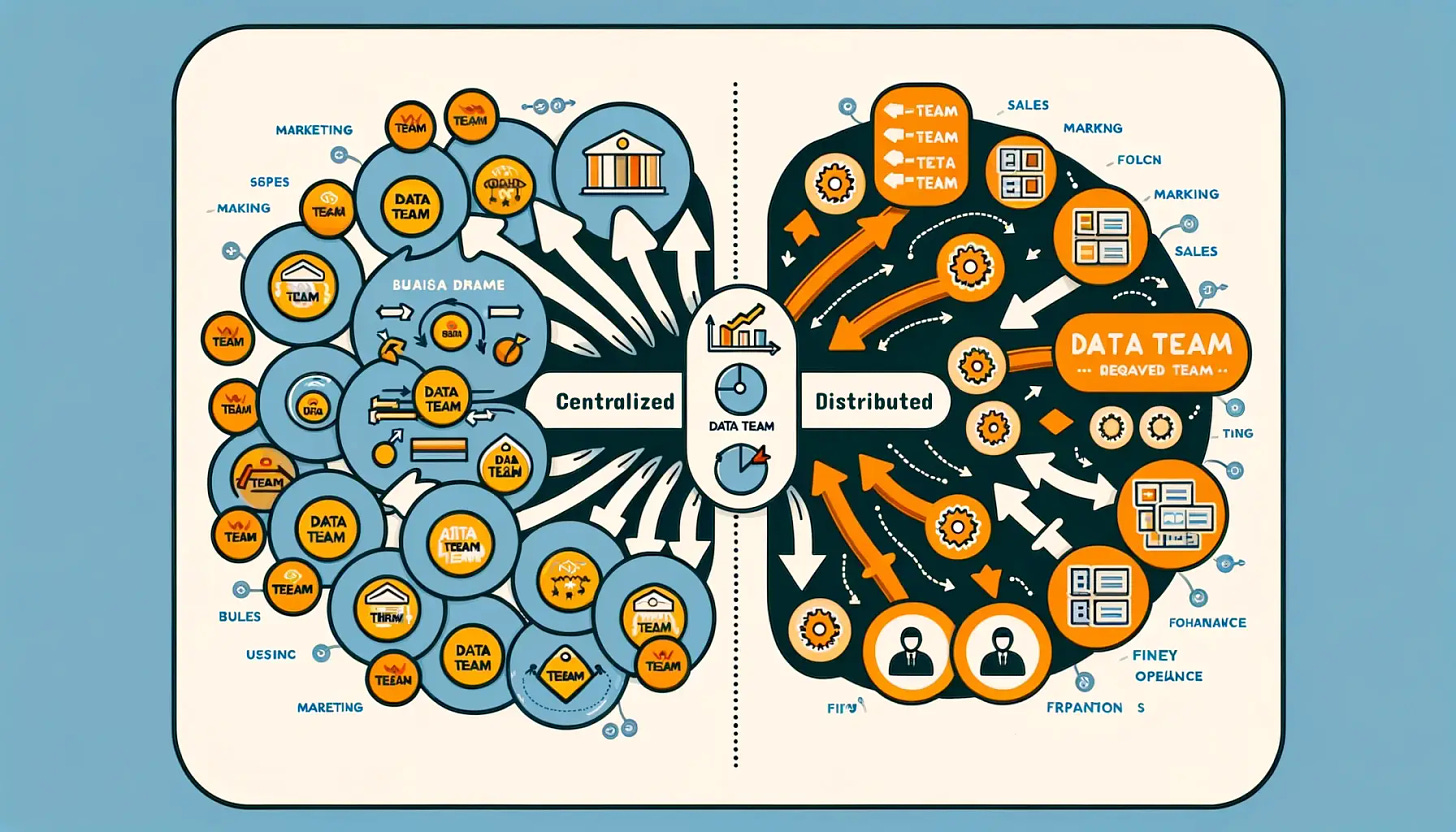

The data teams are in for a similar transition: the days of having a team called "data teams" are over. Any sufficiently large software team has noticed the drawbacks of having isolated functional teams, and instead transitioning towards having cross-functional, agile teams, and the same principle applies to data teams. Instead of having a siloed team that takes in the data everyone else in the company produces and tries to make sense of it, the data team should be distributed among the business teams.

The mindset of the business teams started shifting towards treating data as an end-to-end product, and the associated rise in quality that comes with that. The organization treats data as part of its core product, and applies the appropriate measures to building, changing, governing, and protecting it.

This requires a rethinking of the structure of the data team:

- The team is transparent and spread across the whole organization.

- The data people within the organization work very closely with the business and product teams, no more siloes.

- The data team needs fewer engineers, more analysts & scientists who understand the business context.

In the end, you do not have a central data team, you have an organization that speaks data on all levels, across all teams.

Shifting the whole data-as-an-afterthought mindset to making it a central beat of the company's heart is kind of like finally deciding to clean up that one junk drawer in your kitchen. You know it's going to be a mess, and it's way easier to just keep shoving stuff in there, but once you get it sorted, finding batteries or that one specific takeout menu becomes a breeze. It's all about making data not just something you do, but a part of who you are as a company.

The real shift in terms of value generated from the data will come once the organizations internalize this change in the mindset.