Incremental vs. Full Refresh Runs in Bruin

Learn how Bruin's interval start/end variables drive incremental runs, how --full-refresh changes the picture, how each behaves across SQL, Python, and ingestr assets, and how to protect critical tables with refresh_restricted.

Overview

Every Bruin run has an interval - a time window of data the run is responsible for, defined by two built-in variables:

- start_date - inclusive lower bound of the window
- end_date - exclusive upper bound of the window

The window tracks the pipeline's schedule one-for-one:

| Schedule | Run at | start_date | end_date |
|---|---|---|---|
| @hourly | 2026-04-26 14:00 | 2026-04-26 13:00 | 2026-04-26 14:00 |
| @daily | 2026-04-26 00:00 | 2026-04-25 | 2026-04-26 |
| 0 */6 * * * (every 6 hours) | 2026-04-26 18:00 | 2026-04-26 12:00 | 2026-04-26 18:00 |
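The arithmetic behind the table above can be sketched in a few lines of Python. This is only an illustration of the rule "the window is the schedule interval that just closed"; Bruin computes the real values itself, and `schedule_window` is a hypothetical helper, not part of Bruin.

```python
from datetime import datetime, timedelta
from typing import Tuple


def schedule_window(run_at: datetime, cadence: timedelta) -> Tuple[datetime, datetime]:
    """Return (start_date, end_date) for a run firing at `run_at`.

    Assumes a fixed-length cadence: end_date is the run time (exclusive)
    and start_date is exactly one cadence earlier (inclusive).
    """
    return run_at - cadence, run_at


# The three rows of the table above.
hourly = schedule_window(datetime(2026, 4, 26, 14, 0), timedelta(hours=1))
daily = schedule_window(datetime(2026, 4, 26, 0, 0), timedelta(days=1))
six_hourly = schedule_window(datetime(2026, 4, 26, 18, 0), timedelta(hours=6))

for label, (start, end) in [("@hourly", hourly), ("@daily", daily), ("0 */6 * * *", six_hourly)]:
    print(f"{label}: {start} -> {end}")
```

Because end_date of one run equals start_date of the next, consecutive windows tile the timeline with no gaps and no overlap.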

Bruin computes these values at runtime and injects them directly into your assets - never hard-code them. The mechanism depends on the asset type:

| Asset type | How the interval is injected |
|---|---|
| SQL | Jinja templating - reference {{ start_date }} / {{ end_date }} in your query |
| Python | Environment variables - read os.environ["BRUIN_START_DATE"] / os.environ["BRUIN_END_DATE"] |
| ingestr | CLI flags Bruin passes to ingestr automatically - no wiring needed |

Use the interval to filter source data so each run only processes the rows in its window - this is the foundation of incremental loading in Bruin.
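For a Python asset, the filtering step might look like the sketch below. It assumes the two environment variables hold ISO dates such as 2026-04-25 (check the values your runs actually receive, since hourly schedules would carry timestamps), and it uses a dictionary as a stand-in for the environment so it can run outside Bruin. `read_interval`, `rows_in_window`, and the sample rows are hypothetical.

```python
import os
from datetime import date


def read_interval(env=os.environ):
    """Parse the run interval from the environment.

    Assumption: values are ISO dates like "2026-04-25". For timestamp
    values, parse with datetime.fromisoformat instead.
    """
    start = date.fromisoformat(env["BRUIN_START_DATE"])
    end = date.fromisoformat(env["BRUIN_END_DATE"])
    return start, end


def rows_in_window(rows, start, end):
    """Keep rows with start <= event_date < end (end is exclusive)."""
    return [r for r in rows if start <= r["event_date"] < end]


# Hypothetical source rows; a real asset would query an API or database.
sample_rows = [
    {"id": 1, "event_date": date(2026, 4, 24)},
    {"id": 2, "event_date": date(2026, 4, 25)},
    {"id": 3, "event_date": date(2026, 4, 26)},
]

# Stand-in for the environment of a @daily run on 2026-04-26.
fake_env = {"BRUIN_START_DATE": "2026-04-25", "BRUIN_END_DATE": "2026-04-26"}

start, end = read_interval(fake_env)
selected = rows_in_window(sample_rows, start, end)
print([r["id"] for r in selected])
```

Inside a real asset you would call `read_interval()` with no argument so it reads the process environment.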

Run modes

Three different things in Bruin are called start_date - distinguish them before reading the table:

| start_date | Where it lives | Role |
|---|---|---|
| Pipeline default | start_date in pipeline.yml | Earliest point full-refresh will rewind to |
| Schedule-derived runtime | Computed by Bruin per scheduled run | Start of the previous schedule interval, set automatically |
| Manual runtime | --start-date CLI flag | Ad-hoc override for backfills |

These three sources map directly to the three ways a run can be triggered:

| Command | Runtime start_date | Runtime end_date | When to use |
|---|---|---|---|
| bruin run --full-refresh | pipeline default start_date | now | First run, or full rebuild |
| bruin run | schedule-derived (start of previous interval) | schedule-derived (start of current interval) | Regular scheduled runs |
| bruin run --start-date X --end-date Y | manual (X) | manual (Y) | Manual backfills of a specific window |

These rules are schedule-agnostic - they apply identically to @hourly, @daily, @weekly, @monthly, or any cron expression. The window simply tracks the schedule cadence one-for-one (hourly schedule → one-hour window, weekly → one-week, and so on).

The classic "load history once, then incrementals" pattern falls straight out of this: trigger the first run with --full-refresh to absorb history from the pipeline default start_date, then let the schedule take over.
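To make the three run modes concrete, here is a small Python sketch of the selection logic described in the table above. It is a model of the documented behavior, not Bruin's implementation; `resolve_interval` and its parameter names are hypothetical. The text does not say what happens when --full-refresh is combined with manual dates, so the sketch deliberately refuses that case instead of guessing.

```python
from datetime import datetime
from typing import Optional, Tuple


def resolve_interval(
    now: datetime,
    pipeline_start: datetime,
    previous_tick: datetime,
    current_tick: datetime,
    full_refresh: bool = False,
    manual_start: Optional[datetime] = None,
    manual_end: Optional[datetime] = None,
) -> Tuple[datetime, datetime]:
    """Pick (start_date, end_date) for one run."""
    manual = manual_start is not None or manual_end is not None
    if full_refresh and manual:
        # Not covered by the text above, so this sketch does not model it.
        raise ValueError("full refresh combined with manual dates is not modeled")
    if full_refresh:
        return pipeline_start, now
    if manual:
        if manual_start is None or manual_end is None:
            raise ValueError("manual runs need both a start and an end")
        return manual_start, manual_end
    return previous_tick, current_tick


common = dict(
    now=datetime(2026, 4, 26, 9, 30),
    pipeline_start=datetime(2024, 1, 1),      # hypothetical pipeline default
    previous_tick=datetime(2026, 4, 25),      # @daily: start of previous interval
    current_tick=datetime(2026, 4, 26),       # @daily: start of current interval
)

scheduled = resolve_interval(**common)
rebuild = resolve_interval(**common, full_refresh=True)
backfill = resolve_interval(
    **common, manual_start=datetime(2026, 3, 1), manual_end=datetime(2026, 3, 8)
)
print(scheduled, rebuild, backfill, sep="\n")
```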

Interval modifiers

To catch late-arriving events or add a lookback/lookahead on top of the runtime interval, use interval modifiers - they shift start_date and end_date by a fixed offset. Set them on the asset (overrides) or the pipeline (default):

    # Asset-level: shift this asset's window back 2 hours
    interval_modifiers:
      start: -2h
      end: 0h

    # Pipeline-level: applies to every asset that doesn't override it
    default:
      interval_modifiers:
        start: -1d
        end: 0h

Modifiers only take effect when the run is invoked with --apply-interval-modifiers, so they are opt-in per run.
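The effect of a modifier on a window can be sketched as plain date arithmetic. The snippet below handles only the two suffixes that appear in the examples above (h for hours, d for days); Bruin's actual syntax may accept more, and `parse_offset` and `apply_modifiers` are hypothetical helpers used for illustration.

```python
import re
from datetime import datetime, timedelta

# Only the units used in the examples above; others are out of scope here.
_UNITS = {"h": "hours", "d": "days"}


def parse_offset(text: str) -> timedelta:
    """Turn strings like "-2h", "0h" or "-1d" into a timedelta."""
    match = re.fullmatch(r"([+-]?\d+)([hd])", text.strip())
    if match is None:
        raise ValueError(f"unsupported offset: {text!r}")
    amount, unit = int(match.group(1)), match.group(2)
    return timedelta(**{_UNITS[unit]: amount})


def apply_modifiers(start, end, start_mod="0h", end_mod="0h"):
    """Shift both ends of the window by their respective offsets."""
    return start + parse_offset(start_mod), end + parse_offset(end_mod)


# An @hourly run at 14:00 normally covers 13:00 to 14:00.
start = datetime(2026, 4, 26, 13, 0)
end = datetime(2026, 4, 26, 14, 0)

widened = apply_modifiers(start, end, start_mod="-2h", end_mod="0h")
print(widened)
```

With start: -2h the hourly window grows from one hour to three, which is what lets a run pick up events that arrived late for the previous two windows.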

Using intervals in assets

Built-in variables are exposed via Jinja templating. Use them in your WHERE clause:

    -- assets/contact_events.sql
    /* @bruin
    name: raw.contact_events
    type: bq.sql
    materialization:
      type: table
      strategy: time_interval
      incremental_key: event_date
      time_granularity: date
    @bruin */

    SELECT
        contact_id,
        event_type,
        event_date,
        account_id
    FROM `external.crm_events`
    WHERE event_date >= '{{ start_date }}'
      AND event_date < '{{ end_date }}'

strategy: time_interval deletes existing rows in the interval before inserting the new batch - safe to re-run any window without duplicates. Other supported strategies: create+replace, append, merge, delete+insert (full reference).
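Why delete-then-insert makes a window safe to re-run can be demonstrated with an in-memory SQLite table. This imitates the behavior described above and is not the SQL Bruin generates; the `events` table, `load_window`, and the sample rows are hypothetical.

```python
import sqlite3


def load_window(conn, source_rows, start, end):
    """Replace the [start, end) slice of `events` with fresh source rows."""
    with conn:  # one transaction: the delete and insert commit together
        conn.execute(
            "DELETE FROM events WHERE event_date >= ? AND event_date < ?",
            (start, end),
        )
        conn.executemany(
            "INSERT INTO events (contact_id, event_date) VALUES (?, ?)",
            [r for r in source_rows if start <= r[1] < end],
        )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (contact_id INTEGER, event_date TEXT)")

# ISO date strings compare correctly as text, so no date parsing is needed.
source = [(1, "2026-04-24"), (2, "2026-04-25"), (3, "2026-04-25")]

load_window(conn, source, "2026-04-25", "2026-04-26")
load_window(conn, source, "2026-04-25", "2026-04-26")  # re-run the same window

count = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
print(count)
```

The second call deletes exactly the rows the first call inserted before loading them again, so the row count stays the same. An append-style load run twice would double it.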

Preview the compiled query with bruin render assets/contact_events.sql to see the actual injected dates.

Full refresh and refresh_restricted

--full-refresh does two things at once: expands the interval back to the pipeline start_date, and drops/recreates tables instead of appending or merging.

The strategy-by-strategy behavior:

| Strategy | Default run | --full-refresh |
|---|---|---|
| create+replace | full overwrite every run | full overwrite (same) |
| append | inserts new rows for the interval | drops + reinserts everything |
| merge | upserts rows in the interval | drops + reloads from start_date |
| delete+insert | deletes interval, reinserts | drops + reloads from start_date |
| time_interval | deletes interval window, reinserts | drops + reloads from start_date |

Protecting tables with refresh_restricted

Some tables must never be dropped on --full-refresh - manually backfilled dimensions, tables with external dependencies, or slow-to-rebuild aggregates whose source has rotated out.

Set refresh_restricted: true in the asset's materialization block to opt out of full-refresh drops:

    # assets/dim_customer.sql
    /* @bruin
    name: warehouse.dim_customer
    type: bq.sql
    materialization:
      type: table
      strategy: merge
      refresh_restricted: true
    columns:
      - name: customer_id
        type: integer
        primary_key: true
    @bruin */

    SELECT customer_id, name, last_seen_at
    FROM raw.customers
    WHERE updated_at >= '{{ start_date }}'
      AND updated_at < '{{ end_date }}'

The asset now behaves like a regular incremental run no matter how the pipeline is invoked - the table on disk is never replaced, only updated by its declared strategy.

To rebuild a restricted asset, flip the flag to false, run, then flip it back. That makes the destructive intent explicit in version control rather than a hidden side effect of how the run was triggered.
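The interaction between the flag and the run mode reduces to one condition, sketched below in Python. This is a mental model of the behavior described in this section, not Bruin's code; the `Asset` class, `plan_action`, and the returned labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    strategy: str
    refresh_restricted: bool = False


def plan_action(asset: Asset, full_refresh: bool) -> str:
    """Describe what a run would do to the asset's table."""
    if full_refresh and not asset.refresh_restricted:
        return "drop_and_rebuild"
    # Restricted assets, and all assets on normal runs, keep their strategy.
    return f"apply_strategy:{asset.strategy}"


dim_customer = Asset("warehouse.dim_customer", "merge", refresh_restricted=True)
contact_events = Asset("raw.contact_events", "time_interval")

for asset in (dim_customer, contact_events):
    print(asset.name, plan_action(asset, full_refresh=True))
```

Under a pipeline-wide full refresh, only the unrestricted asset is rebuilt; the restricted one still gets a merge.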

Best practices

- Always interval-bound WHERE clauses - WHERE ts >= '{{ start_date }}' AND ts < '{{ end_date }}'. Open-ended filters defeat the purpose of intervals.
- Use < (not <=) on end_date - it's exclusive; <= causes overlap with the next run.
- Pick the right strategy for re-runs - time_interval and delete+insert make windows idempotent; append does not.
- Keep filter window = delete window - time_interval and delete+insert only delete rows in the runtime interval, so a query that reaches further back inserts rows it didn't delete. Example: @daily + time_interval deletes 1 day, but WHERE ts >= start_date - 3 days inserts 4 days of rows - that's 3 days of duplicated rows. Extend the window with interval modifiers, not the WHERE clause.
- In Python, branch on BRUIN_FULL_REFRESH - check os.environ.get("BRUIN_FULL_REFRESH") == "1" to pick between the full-history path and the incremental-window path, keeping both in one asset.
- Set refresh_restricted: true on hand-curated tables - prevents accidental drops during a pipeline-wide full refresh.
- Set a sensible pipeline start_date - it bounds how far back full-refresh will go.
- Render before you run - bruin render shows the exact dates Bruin will inject so you catch off-by-one mistakes early.
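The BRUIN_FULL_REFRESH branch from the list above might be structured like this in a Python asset. The sketch assumes the date variables hold ISO dates such as 2026-04-25 and passes a dictionary in place of the real environment so it runs anywhere; `ORDERS` and `fetch_orders` are hypothetical stand-ins for a real source.

```python
import os
from datetime import date

# Hypothetical source: (order_id, order_date) pairs standing in for an API.
ORDERS = [
    (1, date(2026, 4, 20)),
    (2, date(2026, 4, 25)),
    (3, date(2026, 4, 26)),
]


def fetch_orders(env=os.environ):
    """Return (path_taken, rows) for this run."""
    if env.get("BRUIN_FULL_REFRESH") == "1":
        # Full-history path: reload everything the source holds.
        return "full", list(ORDERS)
    # Incremental path: only rows inside [start, end).
    start = date.fromisoformat(env["BRUIN_START_DATE"])
    end = date.fromisoformat(env["BRUIN_END_DATE"])
    return "incremental", [o for o in ORDERS if start <= o[1] < end]


window_env = {"BRUIN_START_DATE": "2026-04-25", "BRUIN_END_DATE": "2026-04-26"}
full_env = dict(window_env, BRUIN_FULL_REFRESH="1")

incremental_result = fetch_orders(window_env)
full_result = fetch_orders(full_env)
print(incremental_result[0], len(incremental_result[1]))
print(full_result[0], len(full_result[1]))
```

Keeping both paths in one function means the asset has a single definition, and the way the run is invoked decides which branch executes.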

Helpful links

More tutorials

Chat with an AI Agent

Use Bruin Cloud's chat to ask an AI agent about your data, generate reports, and run Bruin Cloud CLI tasks like pipeline status and history.

Configure AI Agents

Create and configure AI agents in Bruin Cloud - pick a project, add messaging integrations, attach a connection set, and set permissions.

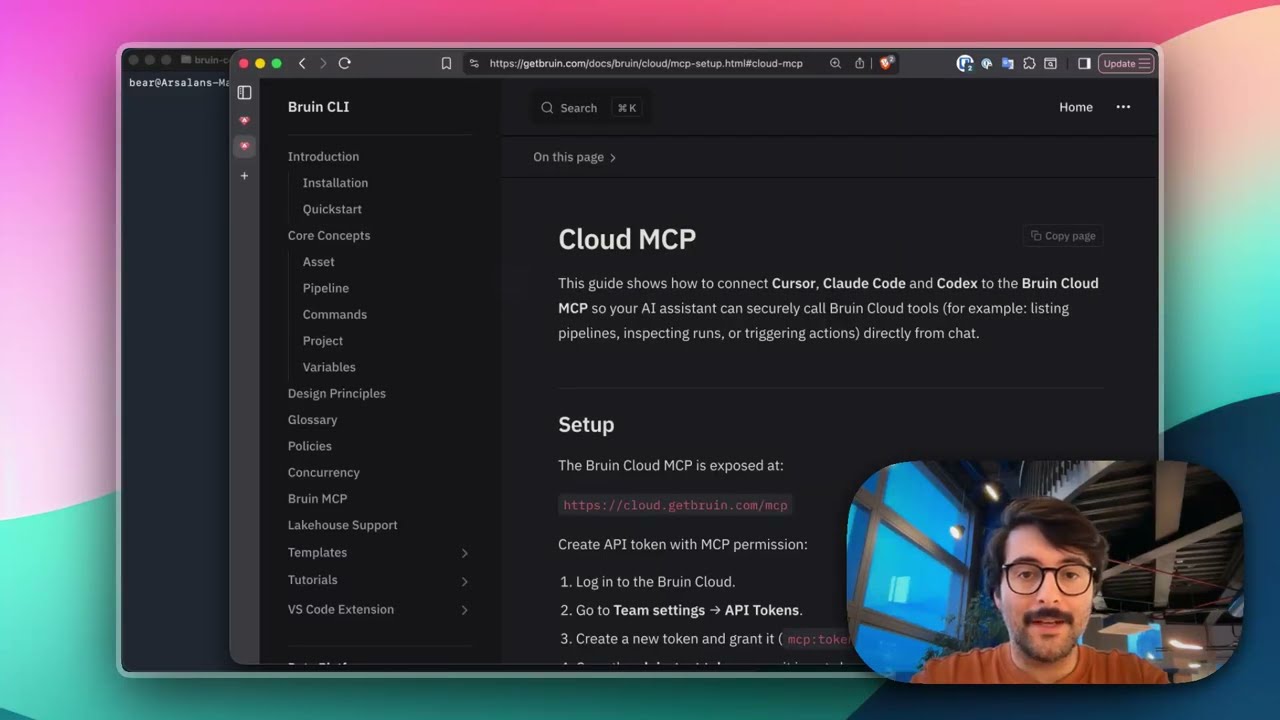

Connect Bruin Cloud MCP to Claude Code

Set up the Bruin Cloud MCP so your AI agent can query pipelines, inspect runs, and trigger actions in Bruin Cloud directly from your terminal.