Materializing Python Assets into Your Warehouse

Return a DataFrame, let Bruin handle the rest. Learn how to use Python materialization to load data into BigQuery, Snowflake, Postgres, and more - with support for merge, append, and incremental strategies.

Overview

Goal - Use Python materialization to turn any Python script that returns a DataFrame into a loaded, managed table in your data warehouse. No manual to_sql, no credential wiring, no duplicate-handling code.

Audience - Data engineers who want Python assets that behave like SQL assets: typed columns, merge/append/incremental strategies, and quality checks - all driven by the asset's YAML config.

Prerequisites

- Bruin CLI installed

- A Bruin project with a configured warehouse connection (BigQuery, Snowflake, Postgres, Redshift, MSSQL, MySQL, DuckDB, etc.)

- Familiarity with Python assets - see Using the Bruin Python SDK if you're new to them

What is Python materialization?

In a regular Python asset, you write code and handle storage yourself. With materialization, you return a DataFrame from a materialize() function and Bruin:

- Runs your script

- Saves the output to a temporary Apache Arrow file

- Uses

ingestrto load it into the destination with your chosen strategy - Cleans up the temporary file

This is how SQL assets already work. Materialization brings the same model to Python: define the target, describe the columns, return the data.

Materialization is a newer feature. It's stable for production use but if you hit an edge case, open an issue.

Steps

1) Anatomy of a materialized Python asset

Here's the minimal shape:

"""@bruin

name: tier1.users

image: python:3.13

connection: my_bigquery

materialization:

type: table

strategy: create+replace

columns:

- name: id

type: integer

primary_key: true

- name: name

type: string

@bruin"""

import pandas as pd

def materialize():

return pd.DataFrame({

"id": [1, 2, 3],

"name": ["Alice", "Bob", "Charlie"],

})

Three things to notice:

- The

@bruinblock declares aconnection(required whenmaterialization.typeistable) and amaterializationblock with atypeandstrategy - The function is named

materialize()- Bruin looks for it specifically - The return value is a DataFrame. Bruin handles the rest

2) Return types supported

materialize() can return any of:

- pandas DataFrame

- polars DataFrame

- list of dicts - e.g.

[{"id": 1, "name": "Alice"}, ...]

Pick whichever fits your upstream code. Polars is great for larger datasets; pandas has the widest ecosystem; a list of dicts is fine for small API results.

3) Choose a materialization strategy

Python assets support four strategies. The time_interval strategy is not supported for Python - use delete+insert with an incremental_key instead if you need time-bucketed incremental loads.

create+replace - full refresh

Rebuilds the table on every run. Simple, predictable, and expensive for large tables.

materialization:

type: table

strategy: create+replace

append - add only

Inserts new rows, never removes. Use for audit logs or event streams where history must be preserved.

materialization:

type: table

strategy: append

delete+insert - replace by key

Deletes rows matching your incremental key's distinct values, then inserts the new batch. Use for reprocessing a specific partition (e.g. "today's rows").

materialization:

type: table

strategy: delete+insert

incremental_key: event_date

merge - upsert

Updates existing rows and inserts new ones based on primary_key. Use when upstream records can change and you need to keep the latest version.

materialization:

type: table

strategy: merge

columns:

- name: id

type: integer

primary_key: true

- name: name

type: string

update_on_merge: true

merge is the most common production strategy and is supported on BigQuery, Snowflake, Postgres, MSSQL, MySQL, Athena (Iceberg), Databricks, ClickHouse, Trino, and DuckDB (limited).

4) Enforce column types

By default, types are inferred from the DataFrame. To enforce them at the destination, set parameters.enforce_schema: true:

"""@bruin

name: tier1.users_api

image: python:3.13

connection: my_bigquery

materialization:

type: table

strategy: merge

parameters:

enforce_schema: true

columns:

- name: id

type: integer

primary_key: true

- name: name

type: string

- name: email

type: string

- name: created_at

type: timestamp

@bruin"""

import pandas as pd

def materialize():

return pd.DataFrame({

"id": [1, 2, 3],

"name": ["Alice", "Bob", "Charlie"],

"email": ["[email protected]", "[email protected]", "[email protected]"],

"created_at": pd.to_datetime(["2026-01-01", "2026-01-02", "2026-01-03"]),

})

5) Add quality checks

Materialized Python assets get the same column-level checks as SQL assets:

columns:

- name: id

type: integer

primary_key: true

checks:

- name: unique

- name: not_null

- name: email

type: string

checks:

- name: not_null

After the load, Bruin runs the checks and fails the asset if any fail.

6) Declare column-level lineage

Link columns back to their upstream sources so lineage shows up correctly in the UI:

columns:

- name: id

type: integer

upstreams:

- table: raw.users

column: user_id

7) Combine materialization with the Python SDK

Materialization handles the write. The Bruin Python SDK handles the read and everything in between. They compose naturally:

"""@bruin

name: analytics.active_users

image: python:3.13

connection: my_bigquery

materialization:

type: table

strategy: merge

parameters:

enforce_schema: true

columns:

- name: id

type: integer

primary_key: true

- name: name

type: string

update_on_merge: true

- name: last_seen_at

type: timestamp

update_on_merge: true

@bruin"""

from bruin import query, context

def materialize():

if context.is_full_refresh:

return query("SELECT id, name, last_seen_at FROM raw.users WHERE active = true")

return query(f"""

SELECT id, name, last_seen_at

FROM raw.users

WHERE active = true

AND last_seen_at BETWEEN '{context.start_date}' AND '{context.end_date}'

""")

Here the SDK reads from the upstream table (with date filtering via context) and materialization writes the result into analytics.active_users using merge.

8) Run it

bruin run assets/my_python_asset.py

For a full rebuild:

bruin run --full-refresh assets/my_python_asset.py

For a specific date window (use with delete+insert):

bruin run --start-date 2026-04-01 --end-date 2026-04-23 assets/my_python_asset.py

How it works under the hood

Bruin uses Apache Arrow as the bridge between Python and the warehouse. On each run:

- Dependencies from your asset's

requirements.txtare installed viauv - Your

materialize()function runs - The return value is serialized to a temporary Arrow file

ingestrloads the file into the destination with your chosen strategy- The temporary file is deleted

This means your Python code never needs warehouse credentials directly - ingestr handles the load using the connection you declared in the @bruin block.

Key takeaways

- Declare

materialization.type: tableplus astrategyand Bruin loads your DataFrame for you - Supported strategies:

create+replace,append,merge,delete+insert.time_intervalis not supported for Python - Return a pandas DataFrame, polars DataFrame, or list of dicts from

materialize() - Pair materialization with the Bruin Python SDK to read, transform, and write with almost no boilerplate

- Column-level checks, lineage, and schema enforcement all work the same as SQL assets

Helpful links

More tutorials

Chat with an AI Agent

Use Bruin Cloud's chat to ask an AI agent about your data, generate reports, and run Bruin Cloud CLI tasks like pipeline status and history.

Configure AI Agents

Create and configure AI agents in Bruin Cloud - pick a project, add messaging integrations, attach a connection set, and set permissions.

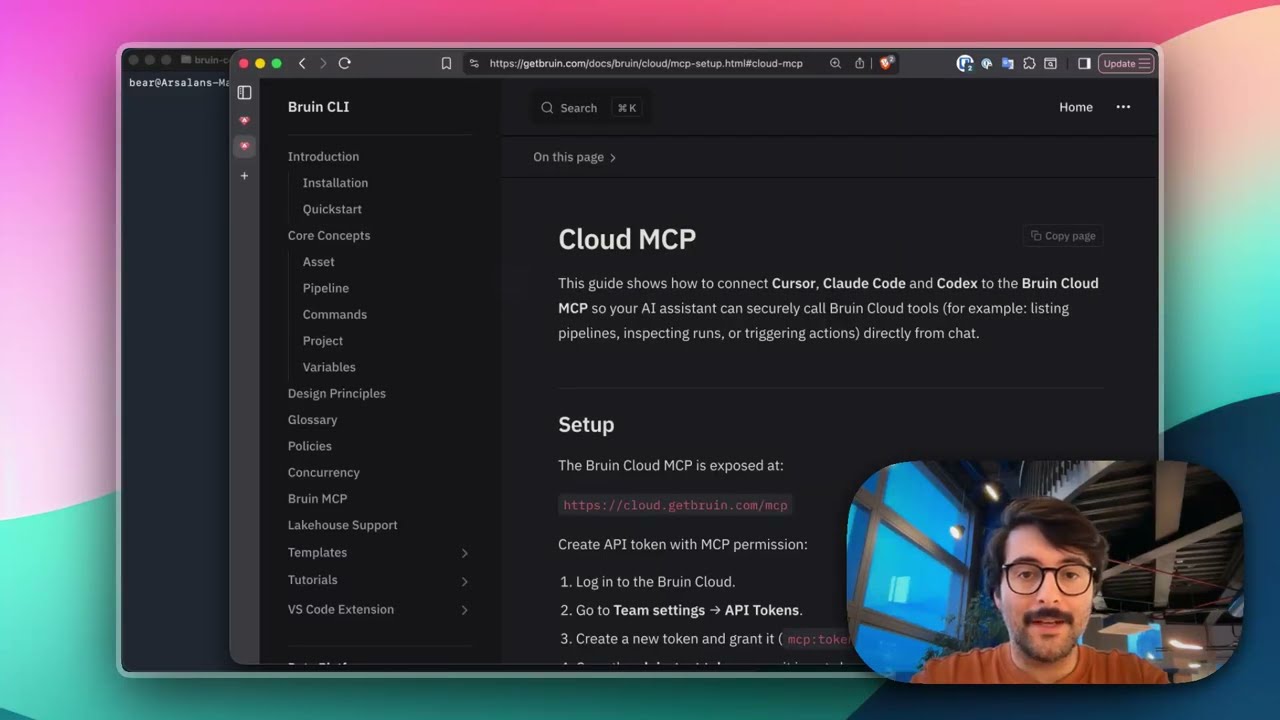

Connect Bruin Cloud MCP to Claude Code

Set up the Bruin Cloud MCP so your AI agent can query pipelines, inspect runs, and trigger actions in Bruin Cloud directly from your terminal.